Radiochemistry, a branch of chemistry that focuses on radioactive substances, has significantly shaped our understanding of atomic science and the universe. From its inception in the late 19th century to its current applications in medicine, energy, and environmental science, radiochemistry has undergone profound transformations. This article explores the pivotal discoveries, influential scientists, and technological advancements that have marked the history of radiochemistry. We begin with the early discoveries of radioactivity, move through the development of nuclear chemistry during the World Wars, and conclude with contemporary applications and future prospects.

Early Discoveries and Foundations

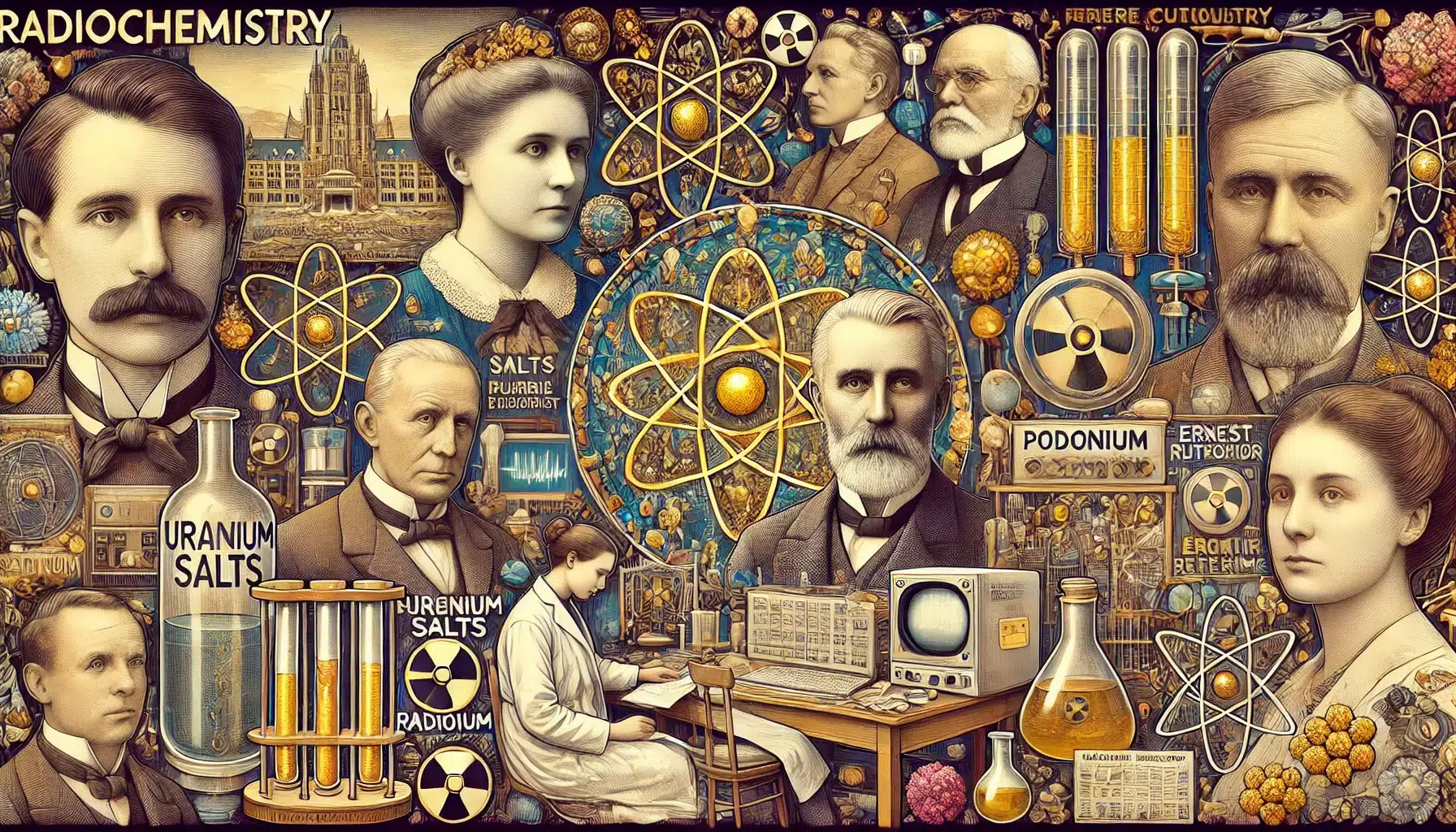

The history of radiochemistry is intertwined with the discovery of radioactivity itself. In 1896, Henri Becquerel, a French physicist, accidentally discovered that uranium salts emitted penetrating rays that could fog photographic plates, much as X-rays do. This observation marked the birth of radiochemistry. Becquerel’s work was soon extended by Marie and Pierre Curie, who in 1898 discovered two new radioactive elements, polonium and radium, in the uranium ore pitchblende. The Curies shared the 1903 Nobel Prize in Physics with Becquerel for their research on radioactivity, and Marie Curie went on to win the 1911 Nobel Prize in Chemistry for the discovery of polonium and radium and the isolation of radium.

The discovery of radium and polonium not only introduced new elements but also drew attention to radioactive decay, the process by which unstable atomic nuclei transform into more stable ones by emitting radiation. The product of a decay may itself be radioactive, so a nucleus can pass through a chain of decays before reaching a stable form. This transformation highlighted the potential of radiochemistry in probing the structure of matter at a fundamental level.

Development of Theoretical Foundations

The early 20th century saw significant advancements in the theoretical understanding of radioactive decay. Ernest Rutherford, a New Zealand-born physicist, played a crucial role in this development. Rutherford’s experiments led to the discovery of the atomic nucleus and the differentiation between alpha and beta radiation. In 1902, Rutherford and Frederick Soddy formulated the theory of radioactive decay, explaining how elements transmute into other elements through the emission of alpha and beta particles. This theory was a cornerstone in the development of nuclear chemistry.

Rutherford’s work culminated in the famous gold foil experiment, carried out by Hans Geiger and Ernest Marsden under his direction. In 1911 Rutherford interpreted its results as showing that an atom consists of a small, dense, positively charged nucleus surrounded by electrons. This discovery was instrumental in developing the planetary model of the atom, laying the groundwork for modern nuclear physics and chemistry.
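The decay theory described in this section is usually expressed today as an exponential law, and a short numerical sketch may help make it concrete. The example below is illustrative only and is not taken from the original article: it assumes the standard form N(t) = N0 · exp(−λt) with λ = ln 2 / half-life. The function name `remaining_fraction` is hypothetical, and the half-life of about 8.02 days for iodine-131 is an approximate textbook value.

```python
import math


def remaining_fraction(elapsed, half_life):
    """Return the fraction of the original nuclei left after `elapsed` time.

    Assumes simple exponential decay of a single nuclide.
    `elapsed` and `half_life` must be given in the same units.
    """
    if half_life <= 0:
        raise ValueError("half_life must be positive")
    if elapsed < 0:
        raise ValueError("elapsed must be non-negative")
    decay_constant = math.log(2) / half_life  # lambda = ln 2 / half-life
    return math.exp(-decay_constant * elapsed)


# Assumption for illustration: iodine-131 half-life of roughly 8.02 days.
I131_HALF_LIFE_DAYS = 8.02

if __name__ == "__main__":
    for days in (0, 8.02, 16.04, 30):
        fraction = remaining_fraction(days, I131_HALF_LIFE_DAYS)
        print(f"after {days:5.2f} days: {fraction:.3f} of the nuclei remain")
```

After one half-life the function returns one half, and after two half-lives one quarter, which is the defining behaviour of the decay law.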

Radiochemistry in the World Wars

The two World Wars catalysed significant advances in radiochemistry. During World War I, radium was used in luminous paints for watch dials and instrument panels. It was during World War II, however, that radiochemistry had its most dramatic impact, through the development of nuclear weapons.

The Manhattan Project, a secret US-led initiative, aimed to develop the first atomic bomb. Key figures such as Glenn T. Seaborg and Robert Oppenheimer were instrumental in this effort. Seaborg’s work in isolating plutonium and his contributions to understanding transuranium elements were pivotal. The first nuclear device was detonated in the Trinity test in New Mexico in July 1945. In August 1945, atomic bombs were dropped on Hiroshima and Nagasaki, hastening the end of the war and demonstrating the destructive potential of nuclear fission.

Post-War Developments and the Atomic Age

The end of World War II marked the beginning of the Atomic Age, characterised by the widespread application of nuclear technology. Radiochemistry played a vital role in the development of nuclear power for electricity generation. The first nuclear power plant to supply electricity to a grid, at Obninsk in the USSR, began operating in 1954. Numerous nuclear power plants were subsequently built worldwide, and nuclear power came to provide a significant portion of the world’s electricity.

Beyond energy production, radiochemistry found applications in medicine. The use of radioactive isotopes in medical imaging and cancer treatment became widespread. Technetium-99m, a gamma-emitting isotope, became a cornerstone of diagnostic imaging, while iodine-131 was used in the treatment of thyroid disorders.

Environmental and Safety Concerns

As nuclear technology proliferated, concerns about its environmental and health impacts grew. The 1950s and 1960s saw several nuclear accidents, including the Windscale fire in the UK (1957) and the Kyshtym disaster in the USSR (1957). These incidents highlighted the dangers of nuclear technology and the need for stringent safety protocols.

The most severe nuclear power accident occurred in 1986 at the Chernobyl Nuclear Power Plant in Ukraine, then part of the USSR. The explosion and subsequent fire released large amounts of radioactive material into the environment, causing widespread contamination and long-term health effects. This disaster led to a reevaluation of nuclear safety and spurred international cooperation in nuclear regulation and disaster response.

Modern Applications and Future Prospects

Today, radiochemistry continues to evolve, finding new applications in various fields. In medicine, radiopharmaceuticals are used for both diagnosis and therapy. Advances in imaging technologies, such as positron emission tomography (PET) and single-photon emission computed tomography (SPECT), rely on radiochemistry for accurate and non-invasive diagnostics.

Environmental radiochemistry is another growing field. Researchers study the behaviour of radionuclides in the environment to understand their impact and develop remediation strategies. This includes monitoring radioactive contamination in water, soil, and air and studying the long-term effects of radioactive waste disposal.

Nuclear forensics, a relatively new discipline, uses radiochemical techniques to trace the origin and history of nuclear materials. This field is crucial for national security and non-proliferation efforts, helping to prevent the illicit trafficking of nuclear materials and identifying the sources of radiological incidents.

Challenges and Ethical Considerations

The advancement of radiochemistry is not without challenges and ethical considerations. The management of radioactive waste remains a significant issue. High-level waste, which remains hazardous for thousands of years, poses long-term storage and containment challenges. Ensuring the safe disposal of this waste is critical to protecting human health and the environment.
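To give a sense of why long-lived waste poses such a storage problem, the exponential decay law can be inverted to estimate how long a nuclide takes to fall to a chosen fraction of its initial amount. The following is a rough sketch under stated assumptions rather than a waste-management calculation: the function name `time_to_fraction` is hypothetical, the half-life of about 24,100 years for plutonium-239 (a long-lived nuclide present in spent nuclear fuel) is an approximate value, and real waste contains many nuclides with very different half-lives.

```python
import math


def time_to_fraction(target_fraction, half_life):
    """Return the time needed for a nuclide to decay to `target_fraction`.

    Assumes simple exponential decay of a single nuclide and ignores
    any ingrowth from parent nuclides. The result is in the same units
    as `half_life`.
    """
    if not 0 < target_fraction <= 1:
        raise ValueError("target_fraction must be in the interval (0, 1]")
    if half_life <= 0:
        raise ValueError("half_life must be positive")
    # Number of half-lives n satisfies (1/2)**n == target_fraction.
    half_lives_needed = math.log2(1 / target_fraction)
    return half_life * half_lives_needed


# Assumption for illustration: plutonium-239 half-life of roughly 24,100 years.
PU239_HALF_LIFE_YEARS = 24_100

if __name__ == "__main__":
    for fraction in (0.5, 0.1, 0.001):
        years = time_to_fraction(fraction, PU239_HALF_LIFE_YEARS)
        print(f"decay to {fraction:g} of initial amount: about {years:,.0f} years")
```

Under these assumptions, reducing a nuclide to roughly one thousandth of its starting amount takes about ten half-lives, which for a nuclide like plutonium-239 amounts to hundreds of thousands of years.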

Ethical considerations also arise in the use of nuclear technology. The development and use of nuclear weapons have profound moral implications. The potential for catastrophic accidents, as demonstrated by Chernobyl in 1986 and the Fukushima Daiichi accident in Japan in 2011, raises questions about the continued use of nuclear power. Balancing the benefits of nuclear technology with its risks is a complex ethical dilemma.

Education and Research

Education and research are fundamental to the continued advancement of radiochemistry. Universities and research institutions worldwide offer specialised programmes in nuclear chemistry and radiochemistry, training the next generation of scientists and engineers. Research in radiochemistry is supported by national and international organisations, such as the International Atomic Energy Agency (IAEA) and the American Chemical Society (ACS).

Recent research in radiochemistry includes the development of new radiopharmaceuticals, advanced nuclear fuel cycles, and improved methods for radioactive waste management. Collaborative efforts, such as international research projects and conferences, facilitate the exchange of knowledge and innovation in the field.

Conclusion

Radiochemistry has a rich and complex history, marked by groundbreaking discoveries, technological advancements, and significant challenges. From the early discovery of radioactivity to the development of nuclear weapons and the peaceful applications of nuclear technology, radiochemistry has profoundly impacted science, medicine, and society. As we move forward, the continued advancement of radiochemistry will depend on balancing the benefits of nuclear technology with its ethical and environmental implications. By fostering education, research, and international cooperation, we can ensure radiochemistry’s safe and beneficial use for future generations.

Disclaimer

This article, A Brief History of Radiochemistry from Discovery to Modern Applications, is published by Open Medscience for informational and educational purposes only. While every effort has been made to ensure the accuracy and reliability of the content, Open Medscience does not guarantee the completeness or correctness of the information provided. The article reflects a general overview and historical account of radiochemistry and is not intended to substitute for professional advice or expert consultation in scientific, medical, or regulatory contexts.
